AI-Powered Legal Document Review: What Works and What Doesn't

AI-powered legal document review: how to optimize processes and avoid common errors in contracts and due diligence.

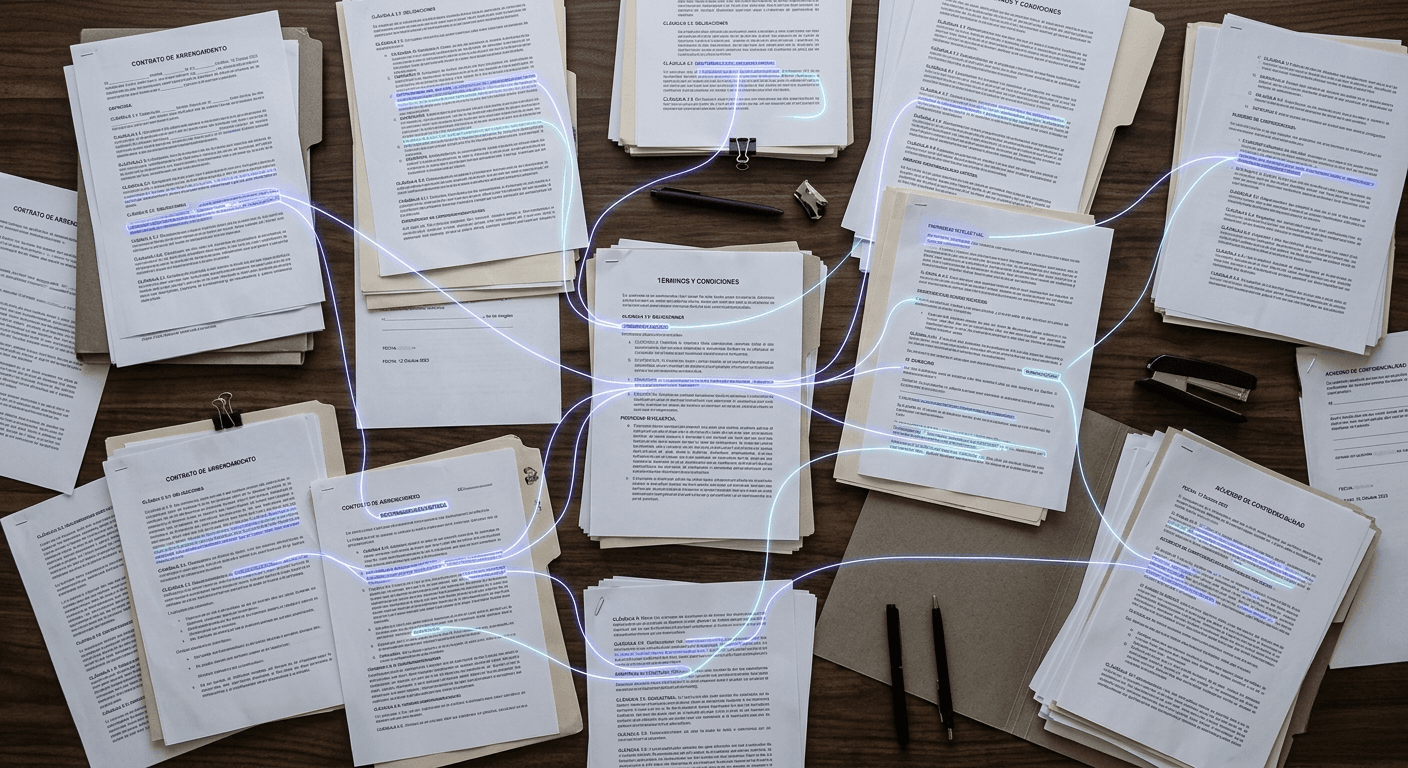

Reviewing legal documents is one of the most time-consuming tasks in a law firm or legal department. In M&A transactions, complex litigation, or compliance processes, entire teams of lawyers spend weeks reviewing contracts, identifying risk clauses, and classifying files. It's necessary work, but a good portion of it is mechanical, repetitive, and prone to fatigue-induced errors.

AI applied to document review promises to change this dynamic. To a large extent, it already is, though not in the way many imagine.

When we talk about document review with AI, we're not referring to asking a chatbot to summarize a contract. We're talking about specialized systems, trained to recognize the structure of legal documents. Tools capable of identifying clauses, obligations, dates, parties, non-standard terms, and absences within contracts, due diligence packages, or litigation files. The difference between a chatbot and a specialized document review tool is the same as between an internet search engine and a case management system.

What AI Does Well

There are three areas where the results are backed by evidence.

Processing volumes that a human team cannot cover on time. In an M&A transaction with thousands of contracts in a data room, traditional manual review covers only 5% to 10% of the documents. Not because teams don't want to review more, but because there's no time or budget. An AI system can analyze 100% of the contracts, which changes the math of risk: problems that go undetected before closing are the ones that generate costs afterward.
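To make that risk math concrete, here is a minimal sketch. It assumes the reviewed contracts are picked uniformly at random, which is a simplification, since real teams prioritize by materiality. The data room size and the number of problematic contracts are hypothetical figures chosen for illustration, not data from any study.

```python
from math import comb


def prob_all_missed(total: int, risky: int, reviewed: int) -> float:
    """Probability that a uniform random sample of `reviewed` contracts
    contains none of the `risky` ones (hypergeometric, zero hits)."""
    if reviewed > total - risky:
        # The sample is too large to avoid every risky contract.
        return 0.0
    return comb(total - risky, reviewed) / comb(total, reviewed)


# Hypothetical data room: 2,000 contracts, 5 with a material problem.
TOTAL, RISKY = 2000, 5

for coverage in (0.05, 0.10, 1.00):
    reviewed = round(TOTAL * coverage)
    p = prob_all_missed(TOTAL, RISKY, reviewed)
    print(f"coverage {coverage:.0%}: P(all {RISKY} problems missed) = {p:.1%}")
```

Under these assumptions, a 5% sample misses every problematic contract about 77% of the time, and a 10% sample about 59% of the time. Full coverage brings that sampling risk to zero, although it says nothing about whether each problem is correctly recognized once the document is read.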

Maintaining consistency throughout the entire process. A lawyer who has been reviewing documents for hours doesn't apply the same criteria in the last contract as in the first. It's a documented effect of cognitive fatigue. AI tools apply the same classification parameters consistently, regardless of volume or time of day.

A landmark 2018 study by LawGeex, conducted with academics from Stanford and the University of Southern California, pitted 20 experienced corporate lawyers against an AI system in reviewing confidentiality agreements. The AI achieved 94% accuracy compared to the lawyers' average of 85%, and it completed the task in 26 seconds versus the professionals' average of 92 minutes.

Detecting patterns and connections between documents. A clause that seems harmless in isolation can represent a material risk when read together with a clause in another contract in the same data room. A human can detect these connections, but needs time and cognitive capacity to do so. In most transactions, both resources are scarce.

According to available data, specialized tools can reduce review time by 70% to 80%. And document review represents more than 80% of total litigation spending, about $42 billion annually in the U.S. alone, according to the American Bar Association.

What AI Cannot Do (and Probably Shouldn't Try)

AI can identify that a contract contains a change of control clause that deviates from the standard. What it cannot do is assess whether that deviation matters in the context of that specific transaction, with that buyer, in that jurisdiction, and under those business circumstances. That requires legal judgment, client knowledge, and professional experience.

It also cannot negotiate, interpret the parties' intent, or explain to a board of directors why they should or shouldn't accept certain conditions.

And there's a real risk that shouldn't be minimized. As of January 2026, more than 1,150 cases of AI-generated content with fake citations or invented jurisprudence have been documented in judicial proceedings, according to the Sterne Kessler database. The rate of incidents has gone from 2 per week to 2-3 per day. This doesn't invalidate the technology, but underscores that human supervision isn't optional. It's part of the design.

What Matters Before Evaluating Tools

Before looking for providers or testing demos, there are three questions any executive should answer:

- What types of documents do we review most frequently and in what volume? A system trained for commercial contracts in common law can fail with lease contracts in Spanish law. The specificity of training determines the quality of the result.

- How many weekly hours does my team spend on document review that could be assisted? If the answer is "few," maybe this isn't the priority. If the answer is "many," the potential impact is high.

- Do we have a defined human supervision process for AI-generated outputs? If it doesn't exist, it doesn't matter what tool you choose. Without supervision, risk is transferred rather than eliminated.
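One way to picture a defined supervision process is as an explicit routing rule: every AI finding lands in a queue that a person owns. The sketch below is only an illustration. The `Finding` fields, the clause types in `ALWAYS_REVIEW`, and the 0.90 threshold are all hypothetical and not taken from any specific tool.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    document: str
    clause_type: str
    confidence: float  # hypothetical model score in [0, 1]


# Hypothetical policy: clause types a lawyer always checks,
# no matter how confident the system claims to be.
ALWAYS_REVIEW = {"change_of_control", "indemnification"}
CONFIDENCE_THRESHOLD = 0.90


def needs_lawyer_review(finding: Finding) -> bool:
    """True when the finding must go to a lawyer before it is relied on."""
    return (
        finding.clause_type in ALWAYS_REVIEW
        or finding.confidence < CONFIDENCE_THRESHOLD
    )


def route(findings):
    """Split findings into a mandatory review queue and a spot-check pool."""
    review_queue, spot_check_pool = [], []
    for finding in findings:
        if needs_lawyer_review(finding):
            review_queue.append(finding)
        else:
            spot_check_pool.append(finding)
    return review_queue, spot_check_pool


findings = [
    Finding("supply_agreement.pdf", "change_of_control", 0.97),
    Finding("lease_madrid.pdf", "termination", 0.62),
    Finding("nda_vendor.pdf", "confidentiality", 0.95),
]
review_queue, spot_check_pool = route(findings)
print(len(review_queue), "for lawyer review;", len(spot_check_pool), "for spot checks")
```

Even the high-confidence findings go to a spot-check pool rather than straight into the final report, so no output is left entirely without a human owner.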

Document review with AI works. But it works when applied to the right type of documents, in the appropriate context, and with professional supervision. Technology doesn't replace a lawyer's judgment. What it does is free up time so that judgment can be applied where it really makes the difference.

Sources:

- LawGeex & Stanford Program in Law, Science and Technology, Comparing the Performance of Artificial Intelligence to Human Lawyers in the Review of Standard Business Contracts (2018).

- Sterne Kessler, AI Hallucination in Litigation Database (January 2026).

- American Bar Association, Legal Technology Survey Report (2025).

- Herbert Smith Freehills, AI-Augmented Due Diligence: Global M&A Outlook 2026 (March 2026).

- Justee, AI Legal Document Review: Beyond Contracts in 2026 (March 2026).